I read an article saying that TVs, VCRs, etc. draw current while turned off, even if they don’t have a clock or other type of display. It said most homes use electricity equivalent to running a 90 W bulb 24/7 just for turned-off electronic equipment. I’ve heard of this before, but have never seen an explanation of how or why a device draws electricity when it’s not doing anything. Can anyone explain this to a simple man?

Discussion Forum

Discussion Forum

Up Next

Video Shorts

Featured Story

The IQ Vise has angled jaws, a simple locking mechanism, and solid holding power.

Featured Video

SawStop's Portable Tablesaw is Bigger and Better Than BeforeHighlights

"I have learned so much thanks to the searchable articles on the FHB website. I can confidently say that I expect to be a life-long subscriber." - M.K.

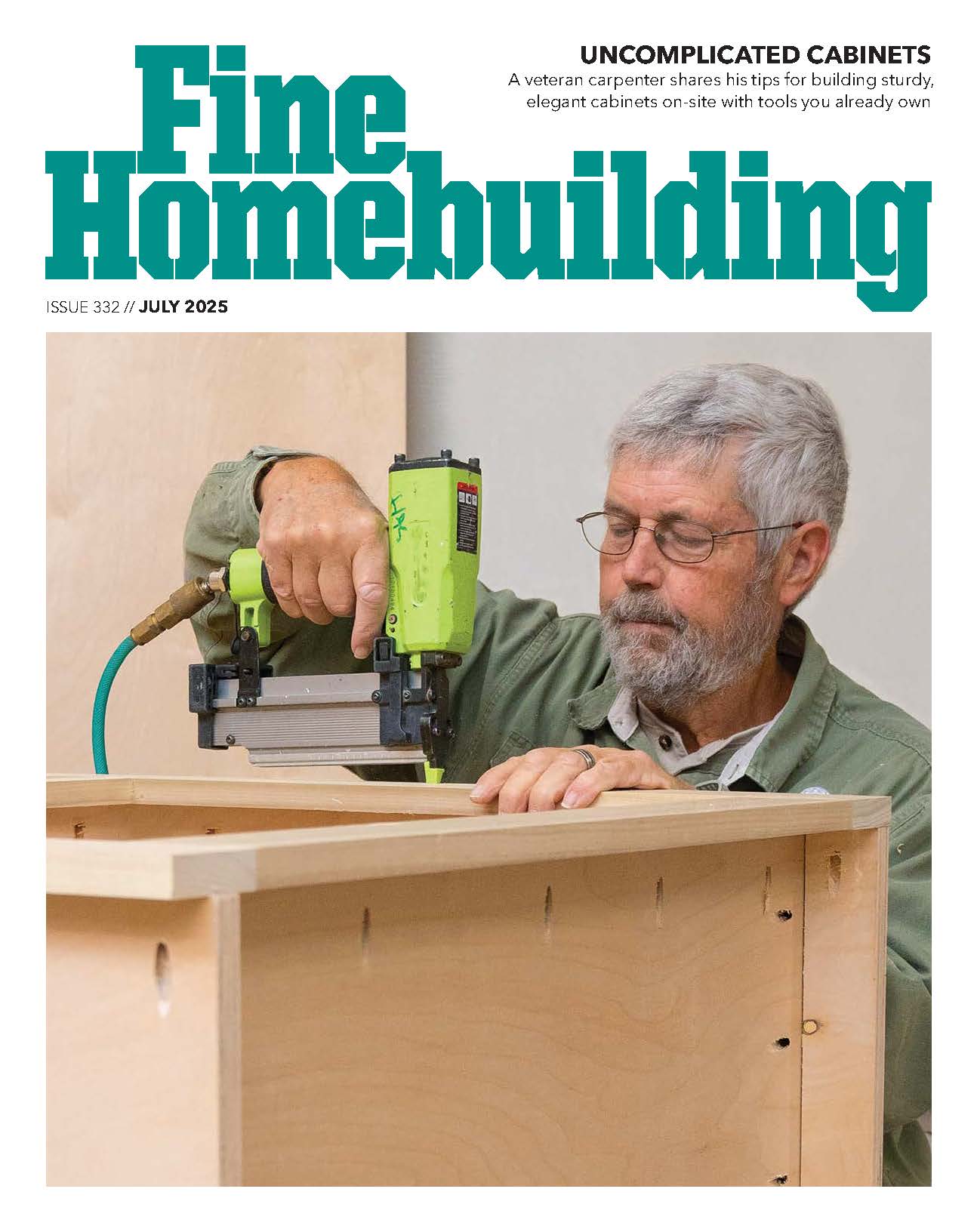

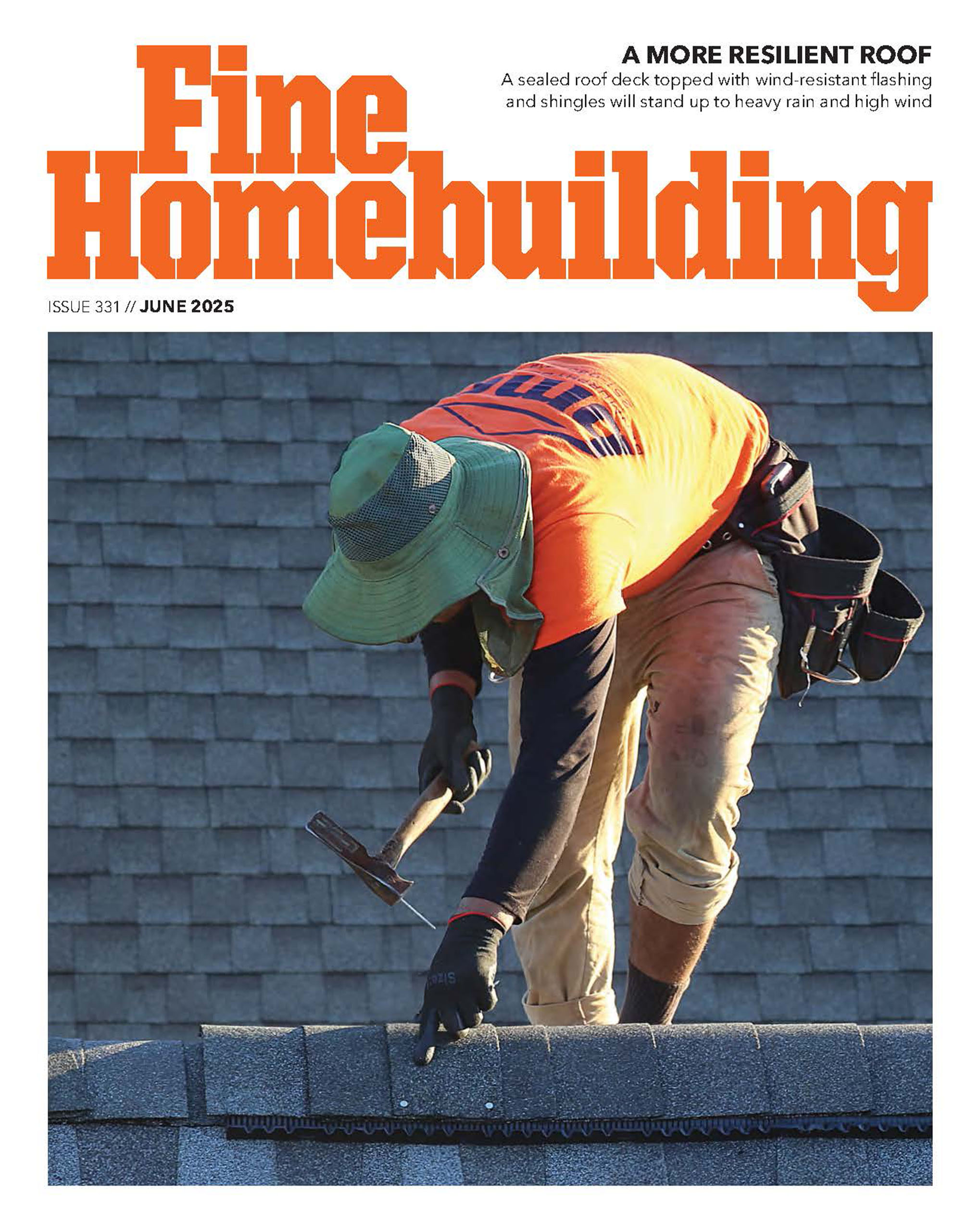

Fine Homebuilding Magazine

Replies

http://www.aceee.org/pubs/a981.htm

http://prod031.sandi.net/energy/pdf/StandbyEnergyWaste1.pdf

Stacy's mom has got it going on.

Most of the memory that stores station presets and other preferences is, long term, powered from the AC line. There is some kind of short-term CMOS battery to 'bridge' short power failures, but if you cut off the AC for hours at a crack, a lot of functions are going to return to their default values once the memory dies.

> have never seen an explanation of how or why a device draws electricity when it's not doing anything. can anyone explain this to a simple man?

You got a remote control for that TV? Does the TV come on when you hit the 'power' button? Well, in order for the TV to receive the remote's power signal, it has to have something running to detect the signal.

Your computer will continue to draw a little so that it can keep the battery charged and keep the clock running. A VCR has a clock and personal settings that get erased when the power is off.

If you've got a fancy cable/satellite receiver, they're pulling all kinds of info while 'off'. Dish Network doesn't hide the fact that their receivers often pull in updates while 'off'.

If you don't want the stuff drawing, you'd have to put them on power strips and turn the power strips off. Just keep in mind you're going to be resetting clocks more often by doing that.

jt8

"With Congress, every time they make a joke it's a law, and every time they make a law it's a joke." -- Will Rogers

Have you calculated what a 90 W bulb burns 24/7? Ain't much compared to setting clocks and waiting 10 min. for the TV and puter to come on.
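
For anyone who wants to check that arithmetic, here is a minimal Python sketch. It assumes a constant load running all 8,760 hours of a non-leap year and a flat electricity price; the $0.10/kWh rate is an assumed example, not a figure from this thread, so plug in the rate from your own bill.

```python
# Annual energy and cost of a load that never switches off.
# Assumptions: constant draw, 8,760 hours per year (no leap day),
# flat price per kWh with no tiers or time-of-use rates.

HOURS_PER_YEAR = 24 * 365  # 8,760 hours


def annual_kwh(watts: float) -> float:
    """Energy in kWh used by a constant load running all year."""
    return watts * HOURS_PER_YEAR / 1000


def annual_cost(watts: float, price_per_kwh: float) -> float:
    """Yearly cost of a constant load at a flat electricity price."""
    return annual_kwh(watts) * price_per_kwh


if __name__ == "__main__":
    ASSUMED_PRICE = 0.10  # dollars per kWh; an example rate, not from the thread
    for label, watts in [("90 W bulb", 90), ("150 W of standby", 150)]:
        kwh = annual_kwh(watts)
        cost = annual_cost(watts, ASSUMED_PRICE)
        print(f"{label}: {kwh:.1f} kWh/yr, about ${cost:.2f}/yr")
```

Under those assumptions a 90 W load comes to about 788 kWh a year, or roughly $79 at $0.10/kWh.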

I'm pretty cheap, but I'm not about to go around turning power strips off and on and resetting clocks all the time. I was just wondering where the electricity was going. Now that I know, it seems obvious.

The publisher of Circuit Cellar magazine, Steve Ciarcia, has a home with every imaginable automation gadget, and it's strewn with computers, cameras, and networks. One day he decided to turn everything "off" to see why his electric bill was so high, even after a long vacation. The power draw was 150 W from all of the various wall warts and standby circuits. Most people don't have that kind of elaborate setup, but it shows where we're headed as more automation creeps into our lives.

I've been reading Ciarcia's articles since way back when Byte was a magazine, and a good magazine. Have every issue of Circuit Cellar.

I remember that article... written in his usual funny style.

It is amazing how many "regular" devices keep most of their power on even after the remote has shut them off. My old Pioneer receiver stayed hot nearly all the time, and I knew it was chewing juice. --Ken

Besides the trickle current used by instant-on appliances and clocks, every wall-wart transformer wastes a small amount of power through losses in its transformer, even with nothing drawing from it. Many houses have 10 or 20 of these plugged in, and together they can amount to 50 W even when the connected devices are off.
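
To see how a pile of small standby loads adds up to that kind of total, the sketch below sums an inventory of devices. The 2.5 W per adapter is an assumed figure, chosen only so that 20 adapters land on the 50 W estimate in the post above; real wall warts vary, and a plug-in watt meter is the way to get actual numbers for your own house. The `StandbyLoad` class and its fields are hypothetical names for illustration.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class StandbyLoad:
    """One kind of always-plugged-in device (hypothetical helper class)."""
    name: str
    watts: float  # standby draw per unit, ideally measured with a watt meter
    count: int = 1


def total_standby_watts(loads: Iterable[StandbyLoad]) -> float:
    """Add up standby draw across every device in the inventory."""
    return sum(load.watts * load.count for load in loads)


if __name__ == "__main__":
    # Assumed figure: 20 adapters at 2.5 W each, to match the 50 W estimate.
    house = [StandbyLoad("wall-wart adapter", watts=2.5, count=20)]
    print(f"Total standby draw: {total_standby_watts(house):.1f} W")
```

Adding more entries to the list (a cable box, a TV, a stereo receiver) is how you would build up a whole-house picture.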

One-fourth of home power usage was from refrigeration. But as refrigerators get more efficient and older models are retired, it won't be long before electronic gadgets surpass them in power usage.

I believe Amory Lovins, co-founder of the Rocky Mountain Institute, calculated that this standby power keeps 19 nuclear plants working full time!!!
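
A "nuclear plants" figure is the same kind of arithmetic scaled up to a whole country: multiply the standby draw per home by the number of homes and divide by the average output of one plant. The sketch below shows only that method. The 100 million homes, 50 W per home, and 1 GW average output per plant are placeholder inputs chosen for illustration, not the numbers Lovins used, so it will not reproduce his 19.

```python
def plant_equivalents(homes: float,
                      standby_watts_per_home: float,
                      plant_output_watts: float = 1e9) -> float:
    """Number of plants whose average output equals a nationwide standby load.

    plant_output_watts defaults to an assumed 1 GW average output per plant.
    """
    national_standby_watts = homes * standby_watts_per_home
    return national_standby_watts / plant_output_watts


if __name__ == "__main__":
    # Placeholder inputs for illustration only; not Lovins's figures.
    print(plant_equivalents(homes=100e6, standby_watts_per_home=50))
```

With those placeholders the answer is 5 plants. The result scales linearly with each input, so it depends heavily on the per-home figure you start from: the 90 W from the original question gives 9 with the other inputs unchanged.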

The best data I can find on this is:

http://www.energyrating.gov.au/library/pubs/200421-storesurvey.pdf

Televisions keep a small current flowing to keep the tube warm, so that the TV turns on quickly. If you remember the TVs of the 1960s, you'll remember that they took a *long* time to give you a picture.

For other appliances, they typically need to power an infrared sensor, display, or something else, so they need to consume some power. It's more complicated (and expensive) to design the component to use less standby power, so it doesn't often get done.

One device listed in a report from LL laboratory was a cable box that draws 7.5 watts on standby. That's just bad power supply design: no simple cable box should pull that much power even when it's on.

Those 1960s TV sets didn't pull any power when they were off, but turn them on and BAM, the electric meter starts spinning fast. 30 or so glowing electron tubes come to life (hopefully) as their heaters warm up! We used ours for supplemental heat in the winter. Didn't watch TV in the summer at all.

> Televisions keep a small amount of current going to keeping the tube warm, so that the TV turns on quickly.

That also keeps boiling away the coating on the cathode, shortening its life. Better to kill everything from a plug strip, and put neat patches of black electrical tape over the blinking 12:00's. ;-)

-- J.S.